Purpose of this parameter is to skip the import of a table or a table partition with index in UNUSABLE state. Which means the table should be there in the database with an index in unusable state. This will reduce the unnecessary time for index creation which is in unusable state during the import. By default value for this parameter is ‘Y’. It will come into action when its value is N.

Example on Data Pump EXPDP IMPDP Parfile Option

Advantages of using parfile expdp impdp

- You can hide the passwords from the command prompt by putting the password in the parfile. Otherwise if you mention the password in the command prompt, it will show up in the ps –ef command.

- The length of the command which you can type in the Operating system command line is limited. You cannot type a very lengthy expdp / impdp command with more number of arguments. In this situation you can create a parameter file(parfile) and run with the datapump.

How to Schedule / Execute / Run an Oracle Procedure through DBMS_JOB?

1. Create a PLSQL procedure

SQL>

create or replace procedure pr_dtl (a in varchar2) is

begin

dbms_output.put_line('Name:'||a);

end;

/

Procedure created.

Check Who is Accessing / Locking / Blocking the Oracle Session / Table

If the table is locked by some session in the database you cannot create index or make any changes to that particular table. You will end up with “ORA-00054 resource busy and acquired with NOWAIT specified” error message. You can find out which session is locking your targeted table by using following queries. You may have to kill (in case not needed) the session by following queries.

How to Run / Schedule a PLSQL Procedure In Shell Script and Crontab?

- Create a PLSQL procedure

SQL>

create or replace procedure pr_dtl (a in varchar2) is

begin

dbms_output.put_line('Name:'||a);

end;

/

Procedure created.

How to Keep / Pin / Check PLSQL Objects in Buffer Cache / Memory Using DBMS_SHARED_POOL.KEEP

It will take time to load huge packages to the memory because the smaller objects needs to be moved out of shared pool to make a room for the new big plsql object. To avoid these kind of delays you can keep the PLSQL codes like procedure, function, etc permanently using the DBMS_SHARED_POOL system package. This package allows you to keep the sequences also in the shared pool which will avoid the sequence number missing situation.

Find / Check Hot/Frequently Accessed Objects in Oracle 10g 11g Database Using v$db_object_cache

V$DB_OBJECT_CACHE gives the details of database objects in the shared pool. It includes all objects like tables, indexes, plsql objects-procedure, function, package and etc. The execution column provides you the number of execution for each object.

Below mentioned EXAMPLE provide you the object type wise details of the object under APPUSER.

How to Set / Fix / Limit the Oracle 10g 11g Trace File Size

The size of the trace file in Oracle determined by the parameter is max_dump_file_size. If you set the value of this parameter to UNLIMITED the trace file will occupy the total available size in the file system if it is required. You can limit the value in session or system level by changing the value of the max_dump_file_size.

Script to Generate and Spool DDL / SQL statements for Tablespaces in Oracle Database

As a DBA sometimes we need to spool the scripts for the tablespace for creating the new databases. For that you can use DBMS_METADATA package to extract the DDL script for the tablespace easily.

Below mentioned script you can use for the same purpose.

Script to generate all tablespaces.

Syntax to Find / Check / Change / Set Default Permanent Tablespace for Oracle 10g, 11g Database

Default Permanent tablespace Syntax

SQL> create tablespace default_tbs datafile '/u01/app/oracle/oradata/dbrepoinf/default_tbs.dbf' size 100m;

Tablespace created.

SQL> alter database default tablespace default_tbs;

Different methods to find out NLS Parameters from Oracle Database

- Using SHOW PARAMETER

Connect / as sysdba

SQL> show parameter nls_

NAME TYPE VALUE

------------------------------------ ----------- -------------

nls_language string AMERICAN

nls_territory string AMERICA

How to find / Set NLS_LANG in Solaris AIX Unix and Windows for Oracle?

What is NLS_LANG Parameter?

It is an environment parameter to specify the local behavior for the oracle software. The formatting of the date, number, time and currency parameter are changes depending on the regions, country. This parameter sets the language and territory used by the application and the oracle database server. NLS_LANG is a local environment variable for the UNIX platforms like Solaris, AIX and Linux. For windows it has to be set in registry or it can be set locally also.

Syntax to Find / Check / Change / Set Default Temporary Tablespace for Oracle 10g, 11g Database

Default Temporary tablespace Syntax

SQL> create temporary tablespace temp_tbs tempfile '/u01/app/oracle/oradata/qa01/temp_tbs_01' size 100m;

Tablespace created.

SQL> alter database default temporary tablespace temp_tbs;

Oracle 11g User Account Status LOCKED(TIMED) with FAILED_LOGIN_ATTEMPTS, PASSWORD_LOCK_TIME Parameters

At least few times you might have noticed the account status is with LOCKED(TIMED). In this topic we will discuss about how it is happens.

See one example here.

SQL> SELECT RESOURCE_NAME,LIMIT FROM DBA_PROFILES

2 WHERE PROFILE='DEFAULT' AND RESOURCE_NAME IN ('FAILED_LOGIN_ATTEMPTS','PASSWORD_LOCK_TIME');

RESOURCE_NAME LIMIT

-------------------------------- ----------------

FAILED_LOGIN_ATTEMPTS 1

PASSWORD_LOCK_TIME 1

Data Pump EXPDP FLASHBACK_SCN Parameter

In normal export utility (EXP) we have consistent=y option to ensure the data consistency of the dump. For Data Pump there is no consistent parameter whereas it has FLASHBACK_SCN and FLASHBACK_TIME parameters to achieve this goal. These two parameters are mutually exclusive. In FLASHBACK_SCN you have to pass the SCN number as the argument and in FLASHBACK_TIME you have to pass timestamp value.

IMPDP FLASHBACK_SCN, FLASHBACK_TIME Shows in Parameter Help – Is it a bug?

Impdp help=y shows FLASHBACK_SCN and FLASHBACK_TIME options in the list of parameters. But, when you try to import a dump with flashback_scn it says

ORA-39005: inconsistent arguments

ORA-39208: Parameter FLASHBACK_SCN is invalid for IMPORT jobs.

Something interesting!! J

impdp help=y

Troubleshoot Fix error IMP-00038: Could not convert to environment character set's handle

Problem Description:

Import fails with

IMP-00038: Could not convert to environment character set's handle

IMP-00000: Import terminated unsuccessfully

Solution Description:

- In most of the cases this error occurs when you take an export using

Troubleshoot Fix IMP-00010 not a valid export file header failed verification

Problem Description:

Import (IMP) fails with IMP-00010 not a valid export file header failed verification

IMP-00000: Import terminated unsuccessfully

00010, 00000, "not a valid export file, header failed verification"

// *Cause: Either the file was not generated by Export or it was corrupted.

Why Adsense Ad Slots are Blank / Empty – Main Reasons

Here I wanted to explain about the major reasons for the blank Adsense ad slot in your pages.

- More number of ad slots in page

As per Adsense policies you are allowed to place 3 image, 3 link and 2 search ads per pages. If you allocate more number of ad slots than allowed slots your page end up with blank ad slots. Moreover it is kind of Adsense policy violation.

Data Pump EXPDP IMPDP Status Parameter for Feedback

In conventional exp / imp we have feedback parameter to see the progress of each table’s export. If you give feedback=1000 then in the output it will show a dot ‘.’ For each and every thousand number of rows. In the data pump does not have the feedback option whereas it has STATUS option available to report the status of the export or import activity in the seconds mentioned as the parameter value.

Data Pump IMPDP REMAP_TABLESPACE Parameter Syntax and Examples

Purpose of this option is to import the objects mentioned in the source tablespace will be imported into the target tablespace. REMAP_TABLESPACE is a good option and it is very useful when the target database does not have the same set of tablespaces. Also, if you wanted to import the dump content to another tablespace which is having more free space then this option is helpful.

Data Pump IMPDP REMAP_SCHEMA Parameter Syntax and Examples

In conventional import (IMP) utility we have fromuser and touser parameter to import the data dump which is taken from one user to another user. In Data Pump impdp oracle introduced new parameter called REMAP_SCHEMA.

Syntax:

impdp REMAP_SCHEMA=source:target where source and target are the schema names.

SQL Syntax to Add Offset Hour, Minute, Year, Day, Month to Oracle Timestamp Values Examples

Syntax to add Hour, Minute, Second, Year, Day and Month to the TIMESTAMP value is

timestampvalue + INTERVAL ‘<value to be added>’ TYPE

where TYPE can be

YEAR,

MONTH,

DAY,

HOUR,

Difference between LOG_ARCHIVE_DEST_1 LOG_ARCHIVE_DEST and LOG_ARCHIVE_DUPLEX_DEST

Oracle database can run either in archivelog mode or non archivelog mode. All these parameters (LOG_ARCHIVE_DEST_1 LOG_ARCHIVE_DEST and LOG_ARCHIVE_DUPLEX_DEST) are valid for archivelog mode only.

How to check whether the database is running on Archivelog Mode?

Connect to sqlplus with / as sysdba

Troubleshoot Fix ORA-16018 ORA-16019 ORA-02097 Oracle Archival Errors

Problem Description:

alter system set log_archive_dest command fails with either

ORA-16018: cannot use LOG_ARCHIVE_DEST with LOG_ARCHIVE_DEST_n or

DB_RECOVERY_FILE_DEST

Or

ORA-16019: cannot use db_recovery_file_dest with LOG_ARCHIVE_DEST or

LOG_ARCHIVE_DUPLEX_DEST

Add Oracle Constraints – Primary, Foreign, Unique, Check, Null to Existing Table

Most of the time the syntax for the constraint addition is confusing one unless you are frequent to the table creation. Here I am giving some examples and syntaxes for the Oracle constraint creations.

- Primary Key

a. With Create Table Command

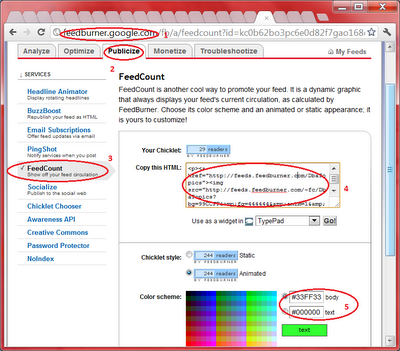

How to Add / Install RSS Feedburner Reader Counter to Blogger?

Feed counter is another way to promote your web blog. This counter will show the current feed’s current circulation. Here is the method to add feedburner counter to the blog.

- Login to http://feedburner.google.com with your account

Oracle 11gR2 LISTAGG Analytical Function – Concatenate / Select Multiple Rows of a Table to Single

This function act as a single set aggregate as well as group set aggregate function. In single set function it returns all rows into a single output row and in group set aggregate function it groups the records as defined in the group by function. As a analytic function it partitions the query result set into groups based on one or more conditions in the query_partition_clause.

How to Check / Find the Oracle Table Depended Objects?

There are two ways to find out the depended objects in Oracle.

First method you can use user_dependencies/ dba_dependencies view

Secondly, you can use deptree table to find out the details.

- Using User_dependencies/DBA_dependencies

Reasons for PL/SQL Code Block Failure with ORA-01001: invalid cursor

Error Description

PL/SQL block (procedure, function, package etc) execution failing with ORA-01001: invalid cursor error message.

Reasons for this error.

- You have declared one cursor and opened with FOR LOOP. In this case you don’t need to specify explicit close for the cursor. By mistake if you mention the close cursor command it will through this error.

Select Failed with ORA-00980: synonym translation is no longer valid

Problem Description:

Select from a synonym is failed with ORA-00980: synonym translation is no longer valid. There are few reasons for this error.

- Check the underlying table is deleted or not. If you still need the table and synonym check the table is available in recycle bin and restore it back.

Create Synonym Failed with ORA-01471: cannot create a synonym with same name as object

Problem Description:

Create synonym command failed with ORA-01471: cannot create a synonym with same name as object.

Error Description:

As the error says you cannot create an object with the same name of an existing object.

Oracle SQL Query to Find out Nth Largest, Highest, Lowest, Smallest Values

It is a common question in interviews to write a query find out nth largest and smallest. Also it is a generic requirement to find out nth values from a group of records.

Query Syntax for Nth Largest

select * from <Table Name> a where &nthLarge =

(select count(distinct <Column Name>)

Troubleshoot Fix unrecoverable error ORA-29701 raised in ASM I/O path; terminating process 17946

Error Description:

In my database alert log I am seeing following error keep on generating periodically. Even though I have enough space in ASM I am not able to resize the datafile, add datafile etc.

ERROR: unrecoverable error ORA-29701 raised in ASM I/O path; terminating process 16956

Interesting Features of Oracle TO_DATE and TRUNC Functions

My current system date is 04-May-2012.

SQL> SELECT SYSDATE FROM DUAL;

SYSDATE

---------

04-MAY-12

When I select to_date('2012', 'YYYY') it gives 1st day of current month.

SQL> select to_date('2012', 'YYYY') from dual;

Oracle SQL Query to Find out Cumulative SUM and Duplicate Rows of a Table Records

- Query to find out Cumulative Sum from the table

Syntax:

SELECT <Column 1>, <Column 2>,

(SELECT SUM(<Column which you need cumulative Sum>)

FROM <Table Name> WHERE ROWID<= A.ROWID) CUMU_SAL

FROM <Table Name> A;

How to Extract / Get Script for DBA_, V$ Oracle Data Dictionary Views?

For DBA_, V$ data dictionary views you can use DBMS_METADATA package to get the source code. See an example for DBA_SYNONYMS dictionary view.

SQL> set pages 1000

SQL> set long 10000

SQL> select dbms_metadata.get_ddl('VIEW','DBA_SYNONYMS') FROM DUAL;

Will IGNORE=Y Option Work with Data Pump IMPDP

In conventional import utility (IMP) we have IGNORE=Y option to ignore the error if the object is already present in the database. If you give the same option in data pump impdp it will consider as the TABLE_EXISTS_ACTION=APPEND. The data from the dump will be appended to the table. See an example below.

Data Pump IMPDP TABLE_EXISTS_ACTION = APPEND, REPLACE, [SKIP] and TRUNCATE

In conventional import utility (IMP) we have ignore =y option which will ignore the error when the object is already exist with the same name. When it comes to the data pump there is one enhanced option of ignore=y which is called TABLE_EXISTS_ACTION. The values for this parameter give 4 different options to handle the existing table and data.

Oracle DBMS_RANDOM Package to Generate Random Numbers, Strings

Generate random decimal value

SQL> select dbms_random.value from dual;

VALUE

----------

.969676006

Generate a random number between 1 and 1000

Generate Random Date between Dates or Period Using Julian Date and DBMS_RANDOM package

What is Julian date format?

In Oracle a date can be formatted into Julian format which will be a number and known as Julian Day. The Julian number will be calculated by counting the number of days from 1st of January 4712 BC to the date.

See examples

Oracle V$ vs RAC GV$ Dynamic Performance Views – Difference and Features

All the objects / views starts with V$ or GV$ are called dynamic performance views. As the name indicates these views are getting updated frequently or it is up-to-date with database statistics and performance statistics. Oracle internally manages this views and no other user has manipulation access on these views. Other users can get read only privilege on these views.

Trick to HIDE / Mask Oracle User Password in Alter User Command?

Generally we give the password in alter user command which will be visible to others. But, in case you don’t want show the password while changing it you can use the accept SQL*PLUS command with hide option.

See the example below.